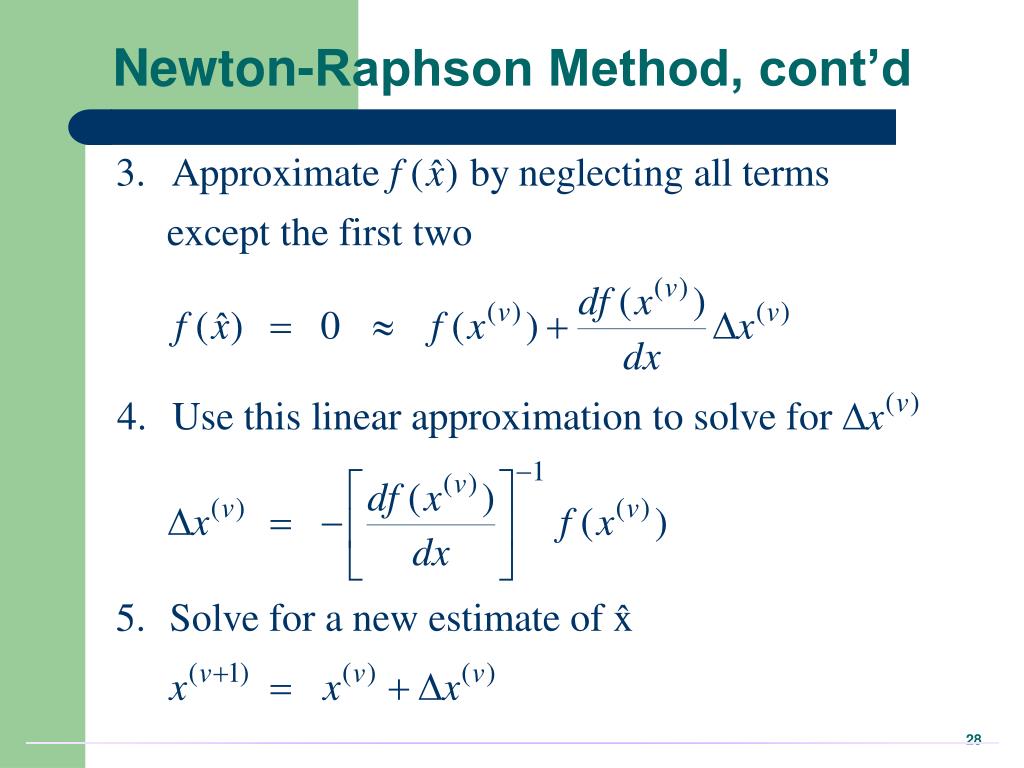

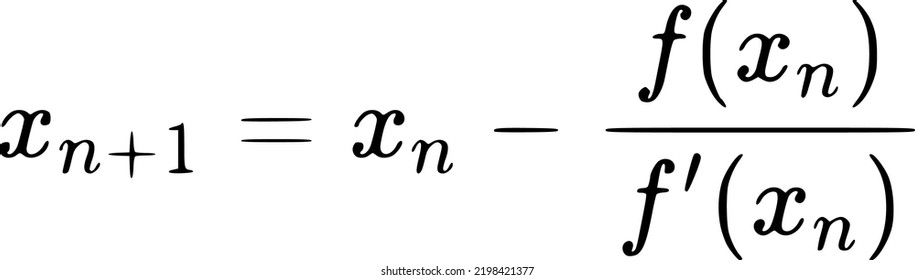

As an aside, when Newton’s method is applied to maximize the logistic regression log likelihood function \(\ell(\theta)\), the resulting method is also called Fisher scoring.The computation cost with Newton’s method in dealing with a \(10,000 \times 10,000\) matrix tends to be prohibitive. Use gradient descent if you have a very large number of parameters, say 10,000 parameters.Use Newton’s method if you have a small number of parameters (i.e., where the computational cost per iteration is manageable), say 10 - 50 parameters because you’ll converge in \(\sim\) 10 - 15 iterations.If \(\theta\) is 100,000-dimensional, each iteration of Newton’s method would require computing a \(100,000 \times 100,000\) matrix and inverting that, which is very compute intensive.If \(\theta\) is 10-dimensional, this involves inverting a \(10 \times 10\) matrix, which is tractable.

However, in case of very high-dimensional problems, where \(\theta\) is a high-dimensional vector, each iteration/step of Newton’s can, however, be more expensive than one iteration of gradient descent, since it requires finding and inverting an \(n \times n\) Hessian but so long as \(n\) is not too large, it is usually much faster overall.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed